AI in California middle schools: who benefits, who gets stuck?

From AI-graded exit tickets to blocked access and teacher training, California middle schools are rapidly testing classroom AI. The results show big learning potential, plus confusion, uneven fairness, and cheating challenges.

California’s middle schools are becoming the test labs for classroom AI—where teachers want smarter feedback and students want clarity, not confusion.

At South Lake Middle School in Irvine, eighth graders hand in monthly math exit tickets to “Snorkl,” an artificial intelligence program that grades responses and delivers instant feedback. The setup is simple: students type or speak their answers, receive results quickly, and can retake quizzes until they meet an acceptable score. The intent is not just speed but forcing students to show their thinking. In the words of the teacher overseeing the approach, AI works best when it pushes students to explain how they arrived at an answer, not when it becomes a shortcut.

The promise is easy to understand. Personalized feedback can help students catch mistakes earlier, and repeated practice can reduce the fear that one wrong attempt defines their learning. Yet the classroom reality is messier. Teachers have to adjust grading criteria when AI misreads the meaning of an explanation, and they often end up reviewing submissions to ensure fairness. One student lost a point on a correct answer after a wording change, an error the teacher caught only after the student questioned the feedback. Situations like that are pushing AI integration from “tech trial” toward “human-in-the-loop,” where educators remain accountable for what students are scored on and why.

Middle school is the current battleground partly for reasons of behavior and development. Children at this age are old enough to use AI tools in meaningful ways, but young enough that the emotional weight of feedback can land differently than it does for older students. A RAND survey found that a large share of U.S. middle schoolers say they use AI for schoolwork, which means schools are no longer deciding whether AI will appear in students’ lives. They are deciding whether it will be guided, assessed, and taught.

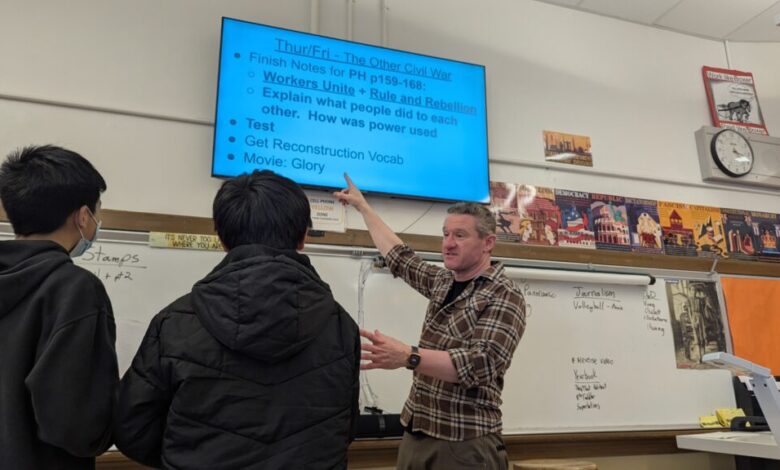

Not every classroom is moving at the same pace. At Marina Middle School in San Francisco, social studies and journalism teacher Matthew Helmenstine takes a different approach. He initially limits AI exposure through school-provided Chromebooks that block access, partly because he wants students to build their own baseline skills first. But the restrictions can’t cover everyone equally when devices are limited, and by the end of the school year he loosens the rules so students can experiment, especially on their phones.

That experiment has produced mixed reactions, and they don’t always line up with what adults might expect. Students reportedly reacted furiously when shown a history video they later learned was AI-generated, seeing it as “baloney.” Yet they enjoyed an AI-animated Chinese New Year video made for a school assembly, suggesting that familiarity, purpose, and presentation shape acceptance. When Helmenstine discusses language models like ChatGPT, students treat the subject as taboo, while AI art feels more like a novelty. He believes the split is rooted in unfamiliarity and uneven understanding, and he worries about what happens when AI tools begin to replace skills like vocabulary-building and independent expression.

Cheating is another pressure point turning AI from a learning tool into a disciplinary test. At Marina, teacher Xilong “Benson” Li says he has noticed an increase in students submitting AI-generated assignments as their own work. Without a detailed schoolwide AI policy, he relies on personal expectations and direct consequences, including assigning zeros when AI authorship is suspected. His concern is not that students will be tempted (he expects they are already exposed to AI outside school) but that using AI word-for-word can become a crutch. The challenge for schools is clear: how to distinguish between permitted assistance and dishonest substitution without punishing students who are still learning how to use tools responsibly.

Across California, these classroom experiences are driving a shift toward more structured implementation. Some districts are treating AI the way they would treat any high-impact educational intervention: with pilots, monitoring, and stopping rules. At KIPP Public Schools Northern California, educator Jennie Dougherty describes a “micro-pilot” approach: test an AI tool with a limited group, then track whether students become more confused, whether teachers spend more time managing technology than teaching, and whether struggling students fall further behind. If the early signals point the wrong direction, the program is stopped.

One pilot at KIPP, an AI tool designed to give immediate feedback on writing, illustrates why pacing matters. Students reacted with distress after the first test, and it took staff time to understand why. Dougherty eventually realized that humans process feedback at the speed of emotion. Middle schoolers, still forming their academic identities, may experience rapid, repeated criticism as an overwhelming barrage rather than targeted learning support. In response, the instructional framing changed: teachers reminded students that feedback isn’t a verdict on ability but a sign of growth.

This is the central lesson emerging from California’s middle school AI rollout: the technology is only half the story. The other half is how feedback is delivered, how expectations are communicated, and whether students are guided to develop agency. Dougherty puts the goal plainly: success isn’t whether students can use AI, but whether they leave school trusting themselves to navigate whatever comes next.

For families, the impact shows up in day-to-day questions: Will AI help a child who struggles, or will it widen gaps? Will a student be scored fairly when wording differs from what an algorithm expects? Will exposure build confidence, or push students to outsource their thinking? For teachers, the stakes are professional and practical: creating rules for tool use, training colleagues, and managing the realities of device access and mixed home experiences.

Looking ahead, California’s middle schools appear to be moving toward a middle path: neither banning AI outright nor treating it as neutral automation. Instead, districts and educators are experimenting with guardrails such as teacher training, iterative grading checks, feedback design, and monitored pilots. The next phase will likely focus less on whether AI can operate in classrooms and more on whether it can support learning without undermining the skills schools are supposed to teach: clarity, critical thinking, and the ability to express original ideas.
