RAG precision tuning can quietly cut retrieval accuracy by 40%

RAG precision – Precision-focused fine-tuning of RAG embeddings can reduce general retrieval accuracy sharply—especially in agentic systems where one mistake can cascade.

Fine-tuning RAG embedding models to “improve precision” may sound like an obvious win, but new findings reviewed by Misryoum suggest it can quietly make retrieval worse for the exact scenarios teams care about.

The core warning: training embeddings for compositional sensitivity (teaching the model to tell apart near-identical sentences with opposite meanings) can degrade dense retrieval’s ability to generalize across broader, unseen topics. Misryoum’s takeaway from the reported results is stark: performance can drop modestly on smaller models, and much more dramatically on a mid-size embedding model used in production environments, with regressions reaching up to around 40%.

That matters because retrieval quality is not just a “search relevance” metric in modern systems. It’s the fuel for agentic pipelines, where an AI doesn’t simply return a document: it uses retrieved context as input to reasoning, planning, and action. In a single-stage setup, a retrieval error can yield a wrong answer. In an agentic workflow, the same error can cascade into downstream decisions, compounding risk in areas like customer support, compliance workflows, finance operations, or any application where incorrect context leads to costly next steps.

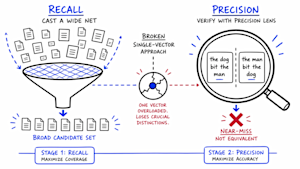

To understand why tuning can backfire, it helps to look at how dense retrieval works under the hood. Embedding models compress each sentence into a point in a high-dimensional space. At retrieval time, the system finds the nearest vectors to the user query. This geometry is excellent for broad topical matching: documents about similar subjects tend to cluster together. But it can also fail when meaning hinges on structure rather than topic. Two sentences with almost the same words, differing only by a negation or a role reversal, can land close to each other because the model “sees” content similarity more than it “understands” the compositional meaning.
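The blind spot can be illustrated with a deliberately crude sketch. It assumes a toy bag-of-words "embedding" (the hypothetical `toy_embed` below), which is not a real embedding model; learned embeddings do encode some word order, but the example shows the extreme case in which an order-insensitive representation makes a role reversal indistinguishable from an exact match.

```python
import math
from collections import Counter


def toy_embed(text: str) -> Counter:
    """Order-insensitive word-count vector (a stand-in, NOT a real embedding model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def nearest(query: str, docs: list[str], k: int = 2) -> list[tuple[float, str]]:
    """Return the k documents whose vectors are closest to the query vector."""
    q = toy_embed(query)
    scored = [(cosine(q, toy_embed(d)), d) for d in docs]
    return sorted(scored, key=lambda s: s[0], reverse=True)[:k]


docs = [
    "the dog bit the man",
    "the man bit the dog",               # role reversal, same vocabulary
    "quarterly revenue grew in europe",  # unrelated topic
]
results = nearest("the dog bit the man", docs)
for score, doc in results:
    print(f"{score:.3f}  {doc}")
```

Both of the first two documents come back with the same score, while the unrelated sentence scores zero: topical matching works, structural discrimination does not.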

The research described by Misryoum frames the tension as a representational tradeoff. When teams fine-tune for sensitivity to structural differences, such as negation flips or “the dog bit the man” versus “the man bit the dog”, the model allocates its vector space to capture that verification-like signal. The problem is that this competes with the original ability to generalize retrieval across wider domains. Put simply: the training objective that improves one kind of discrimination can weaken the retrieval job the model was already performing.

A particularly practical complication for enterprise teams is that conventional tuning and evaluation can miss the damage. Training metrics typically measure performance on the task used during fine-tuning, not the broader “retrieval across unrelated topics” capability. Misryoum’s interpretive point for readers is that a model can score better on near-miss rejection during experiments while still regressing on general correctness once deployed, meaning the risk may only show up in production logs, not in the lab. Even more troubling, some failure types appear harder to fix. The findings reported that negation and spatial flip errors improve with structured training, while binding errors (cases where modifiers attach to the wrong entity or party) barely move. That leaves a precision problem in exactly the places where humans expect the highest rigor.
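One way to surface this kind of hidden regression before deployment is to score the baseline and the fine-tuned model on both the tuning task and a held-out general retrieval set, then flag any metric that drops. The sketch below is a minimal version of that idea; the function names, the metric names, and the numbers are all hypothetical placeholders, not figures from the research.

```python
from typing import Callable, Sequence


def recall_at_k(
    retrieve: Callable[[str, int], Sequence[str]],
    labelled: Sequence[tuple[str, str]],
    k: int = 5,
) -> float:
    """Fraction of (query, relevant_doc_id) pairs whose doc id appears in the top k."""
    if not labelled:
        return 0.0
    hits = sum(1 for query, doc_id in labelled if doc_id in retrieve(query, k))
    return hits / len(labelled)


def flag_regressions(
    baseline: dict[str, float],
    tuned: dict[str, float],
    max_drop: float = 0.02,
) -> list[str]:
    """Names of metrics where the tuned model trails the baseline by more than max_drop."""
    return [
        name
        for name, base in baseline.items()
        if base - tuned.get(name, 0.0) > max_drop
    ]


# Illustrative numbers only; they are NOT results from the reported research.
baseline = {"near_miss_rejection": 0.55, "general_recall@5": 0.90}
tuned = {"near_miss_rejection": 0.80, "general_recall@5": 0.70}

regressed = flag_regressions(baseline, tuned)
print(regressed)
```

The design choice that matters is keeping the general retrieval set disjoint from the fine-tuning data, so an improvement on the tuned task cannot mask a loss elsewhere.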

When teams notice retrieval precision issues, their instinct is often to add layers on top of embeddings rather than rethink the pipeline. Misryoum highlights that the reported analysis found several common “patches” address other objectives while missing the structural failure mode. Hybrid search, for example, improves precision when words are missing or phrased differently, but it cannot detect structural meaning reversals when both sentences share the same vocabulary. MaxSim-style reranking can improve relevance benchmarks by better aligning query terms to document terms, but it can still treat structural near-misses as nearly identical because it isn’t truly verifying compositional structure. Cross-encoders can be accurate because they compare query and candidate at the token level, yet they may be too expensive to run at high query volumes in production settings. And while contextual or agentic memory architectures are often described as the next step beyond RAG, the reported work suggests they still depend on retrieval at query time, so the structural mismatch problem can persist even if latency requirements are more forgiving.
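The hybrid-search limitation is easy to see in miniature. The sketch below assumes a simple token-set Jaccard score as the lexical component and a weighted sum as the fusion rule; both are illustrative choices (production systems more often use BM25 and other fusion schemes), and the example sentences are invented.

```python
def jaccard(a: str, b: str) -> float:
    """Token-set overlap; a simplified stand-in for a lexical scorer such as BM25."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def hybrid_score(dense: float, lexical: float, alpha: float = 0.5) -> float:
    """Weighted sum of a dense similarity and a lexical similarity (one possible fusion)."""
    return alpha * dense + (1 - alpha) * lexical


query = "the tenant must notify the landlord"
correct = "the tenant must notify the landlord in writing"
reversed_roles = "the landlord must notify the tenant in writing"

lex_correct = jaccard(query, correct)
lex_reversed = jaccard(query, reversed_roles)
print(lex_correct, lex_reversed)
```

Because both candidates contain exactly the same words, the lexical component scores them identically, so any separation between them has to come from the dense score, which is the component that struggles with this case in the first place.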

The most actionable insight in the findings reviewed by Misryoum is a shift in architecture rather than further tweaking. The approach validated in the research separates “recall” from “precision” into two stages, assigning each stage a dedicated job. Stage one keeps the familiar dense retrieval behavior: use embeddings to quickly cast a wide net and return strong candidates efficiently.

Stage two is the verification stage. Instead of treating candidate ranking as a single similarity score, a smaller learned Transformer model examines the query and each candidate at the token level. This is where structural mismatches, like negation flips or role reversals, can be detected more explicitly, because the model is asked to compare meaning-relevant patterns rather than rely entirely on vector proximity. Misryoum’s emphasis here is on what the results implied: among the tested alternatives, the verifier-based two-stage design was the only one that reliably caught the structural near-miss failures that single-vector systems miss.
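The shape of that pipeline can be sketched in a few lines. This is not the research's implementation: the learned Transformer verifier is replaced here by a hypothetical rule-based `toy_verify` (word-order and negation checks), and stage one uses a simple word-overlap count in place of real embeddings. Only the control flow, a wide recall pass followed by per-candidate verification, is the point.

```python
from typing import Callable

NEGATIONS = {"not", "no", "never"}


def overlap(query: str, doc: str) -> int:
    """Stage-one stand-in: shared-word count instead of embedding similarity."""
    return len(set(query.lower().split()) & set(doc.lower().split()))


def toy_verify(query: str, candidate: str) -> bool:
    """Stage-two stand-in for a learned verifier (rule-based, for illustration only)."""
    q, c = query.lower().split(), candidate.lower().split()
    same_polarity = bool(NEGATIONS & set(q)) == bool(NEGATIONS & set(c))
    remaining = iter(c)
    # True only if the query tokens appear in the candidate in the same order.
    in_order = all(tok in remaining for tok in q)
    return same_polarity and in_order


def two_stage_search(
    query: str,
    docs: list[str],
    similarity: Callable[[str, str], float],
    verify: Callable[[str, str], bool],
    recall_k: int = 10,
    verify_n: int = 5,
) -> list[str]:
    # Stage 1 (recall): cast a wide net using cheap similarity.
    ranked = sorted(docs, key=lambda d: similarity(query, d), reverse=True)[:recall_k]
    # Stage 2 (precision): keep only candidates the verifier accepts.
    return [d for d in ranked[:verify_n] if verify(query, d)]


docs = [
    "the man bit the dog",
    "yesterday the dog bit the man",
    "the dog did not bite the man",
    "stock prices fell sharply",
]
accepted = two_stage_search(
    "the dog bit the man", docs, overlap, toy_verify, recall_k=3, verify_n=3
)
print(accepted)
```

Stage one ranks the role-reversed sentence at the top, and stage two is what removes it along with the negated one.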

There’s no free lunch, though. Misryoum readers should expect a latency tradeoff: adding verification costs time, and the cost scales with how many candidates get verified per query. For precision-sensitive workloads (legal review, accounting checks, and other settings where a single wrong interpretation can cause real harm), full verification is more justifiable. For general-purpose search, teams might run lighter verification, verifying fewer candidates to keep response times manageable.
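A back-of-the-envelope budget makes the tradeoff concrete. The sketch below assumes candidates are verified one at a time at a roughly constant cost, so total latency is approximately the retrieval time plus the number of verified candidates times the per-candidate cost; batching or parallel verification would change the arithmetic. The function name and all timings are hypothetical.

```python
def max_verifiable_candidates(
    budget_ms: float,
    retrieve_ms: float,
    verify_ms_per_candidate: float,
) -> int:
    """How many candidates fit in the latency budget under a linear, sequential cost model."""
    if verify_ms_per_candidate <= 0:
        raise ValueError("verify_ms_per_candidate must be positive")
    remaining = budget_ms - retrieve_ms
    if remaining <= 0:
        return 0
    return int(remaining // verify_ms_per_candidate)


# Hypothetical timings: a 200 ms budget, 20 ms retrieval, 15 ms per verification.
n = max_verifiable_candidates(budget_ms=200, retrieve_ms=20, verify_ms_per_candidate=15)
print(n)
```

A precision-sensitive workload might accept a larger budget to verify every retrieved candidate, while a general search endpoint could cap the count at whatever the budget allows.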

This also reframes what “success” should mean for enterprise teams running semantic search or caching. The reported work traces the investigation to a production issue in semantic caching systems: responses were fast but semantically incorrect because the retrieval layer treated meaning-reversing queries as identical. That is a practical reminder for Misryoum’s audience: speed and apparent semantic similarity are not the same as correctness.
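For a semantic cache, the same lesson suggests not serving a hit on similarity alone. The following sketch is one possible guard, with a hypothetical `GuardedSemanticCache` class, a token-set Jaccard similarity standing in for embedding similarity, and an exact token-sequence check standing in for a real verifier; none of these names or choices come from the reported work.

```python
from typing import Callable, Optional


class GuardedSemanticCache:
    """Serve a cached response only if it is both similar enough AND verified."""

    def __init__(
        self,
        similarity: Callable[[str, str], float],
        verify: Callable[[str, str], bool],
        threshold: float = 0.9,
    ) -> None:
        self._similarity = similarity
        self._verify = verify
        self._threshold = threshold
        self._entries: list[tuple[str, str]] = []

    def put(self, query: str, response: str) -> None:
        self._entries.append((query, response))

    def get(self, query: str) -> Optional[str]:
        best = max(
            self._entries,
            key=lambda entry: self._similarity(query, entry[0]),
            default=None,
        )
        if best is None:
            return None
        cached_query, response = best
        if self._similarity(query, cached_query) < self._threshold:
            return None  # not similar enough: ordinary cache miss
        if not self._verify(query, cached_query):
            return None  # similar but structurally different: forced miss
        return response


def jaccard(a: str, b: str) -> float:
    ta, tb = set(a.lower().split()), set(b.lower().split())
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0


def same_token_order(a: str, b: str) -> bool:
    return a.lower().split() == b.lower().split()


cache = GuardedSemanticCache(jaccard, same_token_order)
cache.put("transfer funds from savings to checking", "cached-response-1")

hit = cache.get("Transfer funds from savings to checking")
miss = cache.get("transfer funds from checking to savings")
print(hit, miss)
```

The reversed request scores a perfect similarity against the cached query, and it is the verification step, not the threshold, that prevents the wrong response from being served.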

For teams planning their next iteration, Misryoum recommends a shift from “tuning for the metric we can measure easily” to “testing for what breaks in the wild.” A useful evaluation framework mentioned in the findings centers on three ideas: correctness (are we right?), completeness (are we missing key context?), and usefulness (does the output help the user or downstream system do the right thing?). The key insight is that correctness failures often undermine the other two, turning a system that looks good on relevance benchmarks into something that is not actually reliable.

Finally, the findings do not argue that RAG is obsolete. Misryoum’s reading is that the deeper message is about assumptions: RAG can be productionized easily, but teams should not assume a single-stage dense-retrieval pipeline with a fine-tuned embedding model is “good enough” for precision-sensitive tasks. The two-stage verifier approach is not prohibitively complex, but it is a mitigation strategy with real performance tradeoffs, especially latency.

In the end, the most important enterprise takeaway is straightforward: if you fine-tune embeddings for precision without pressure-testing general retrieval, you may improve one slice of behavior while weakening the overall job. In agentic systems, that difference can be the margin between reliable automation and costly errors.