GPU “Silicon Lottery” Reveals Performance Gaps

Misryoum reports that “identical” cloud GPUs can vary widely in AI performance, making benchmarking essential for renters.

A cloud GPU that looks “identical” on paper can still deliver a very different level of performance in practice, and Misryoum says that reality is increasingly difficult to ignore for anyone paying for AI compute.

Misryoum notes that this phenomenon is commonly referred to as the “silicon lottery”: even chips of the same GPU model can behave differently because of factors tied to manufacturing and each chip’s condition. For customers renting GPU time, that variability can turn a straightforward purchase decision into a roll of the dice, especially when workloads are sensitive and margins are tight.

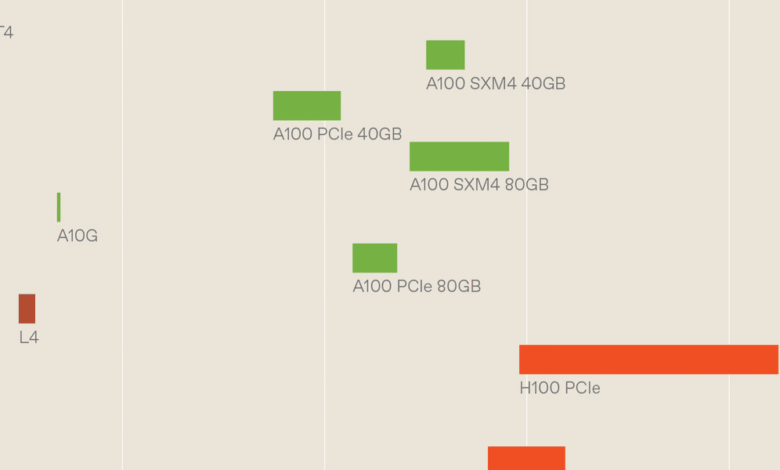

In this context, Misryoum points to research that examined how GPU performance changes across cloud environments. The study covered thousands of GPU instances drawn from multiple cloud operators and tested a range of Nvidia models commonly used for AI workloads. The goal was to capture a snapshot of how well these devices run large language model (LLM) tasks, focusing on compute behavior and memory throughput.

The results showed that performance and memory bandwidth varied not just across different GPU models but also among chips sold under the same label. Misryoum reports that some of the widest gaps appeared within specific model groups, with differences large enough to meaningfully affect how quickly an instance can process a workload.

Why this matters: if you assume a single GPU type always delivers a predictable outcome, variability can quietly undermine cost planning, throughput targets, and performance comparisons between vendors.

Misryoum says researchers attribute part of the effect to normal operational differences, including how hardware is cooled and how cloud providers configure their systems. But their analysis also suggests that the largest driver is likely the chips themselves, with manufacturing-related variation and device-specific characteristics playing a major role.

Meanwhile, for renters trying to avoid surprises, Misryoum emphasizes a practical takeaway: benchmark what you actually receive. The research highlights the value of running a targeted performance test on the specific cloud instance before committing to a larger training or inference run, rather than relying solely on advertised specs or model names.
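As an illustration of what “benchmark what you actually receive” might look like, here is a minimal, hypothetical Python harness. It is not the method used in the research. The function names (`time_workload`, `meets_baseline`, `relative_spread`), the 10% tolerance, and the toy CPU workload are all assumptions for the sketch. In practice a renter would swap in a short, representative LLM step and a baseline time they trust; with asynchronous GPU frameworks, the workload should also block until the device finishes (for example, by calling PyTorch’s `torch.cuda.synchronize()`) so the timer measures real work.

```python
import statistics
import time
from typing import Callable, Sequence


def time_workload(workload: Callable[[], None], repeats: int = 5, warmup: int = 1) -> float:
    """Run `workload` a few times and return the median wall-clock time in seconds.

    Warmup runs are discarded so one-time costs (caching, compilation) do not skew results.
    """
    for _ in range(warmup):
        workload()
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        workload()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def meets_baseline(measured_seconds: float, baseline_seconds: float, tolerance: float = 0.10) -> bool:
    """Return True if the measured time is at most `tolerance` (e.g. 10%) slower than the baseline.

    The 10% default is an arbitrary assumption; pick a threshold that fits your budget.
    """
    return measured_seconds <= baseline_seconds * (1.0 + tolerance)


def relative_spread(values: Sequence[float]) -> float:
    """Spread of measurements across same-labeled instances: (max - min) / median."""
    mid = statistics.median(values)
    return (max(values) - min(values)) / mid


def toy_workload() -> None:
    """Hypothetical stand-in for a real GPU job; replace with a short LLM step."""
    sum(i * i for i in range(200_000))


if __name__ == "__main__":
    median_s = time_workload(toy_workload)
    # The baseline here is just the measured value itself, purely to show the call shape.
    print(f"median: {median_s:.4f} s, acceptable: {meets_baseline(median_s, median_s)}")
```

Using the median rather than the mean keeps a single slow run (for example, from a noisy neighbor) from dominating the result, and `relative_spread` gives a quick way to compare several instances that carry the same GPU label.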

Misryoum’s final takeaway is straightforward: in today’s AI supply chain, renting a named GPU model does not guarantee a consistent level of performance. Measuring your instance directly is becoming less of a nice-to-have and more of a baseline step for anyone trying to get reliable performance from rented GPUs.