GPT-5.5 in a 10-round test: where it shines—and where it overreaches

A new round of hands-on testing finds GPT-5.5 strong at reasoning, coding, and creative work, but it can ignore instructions when it gets too enthusiastic.

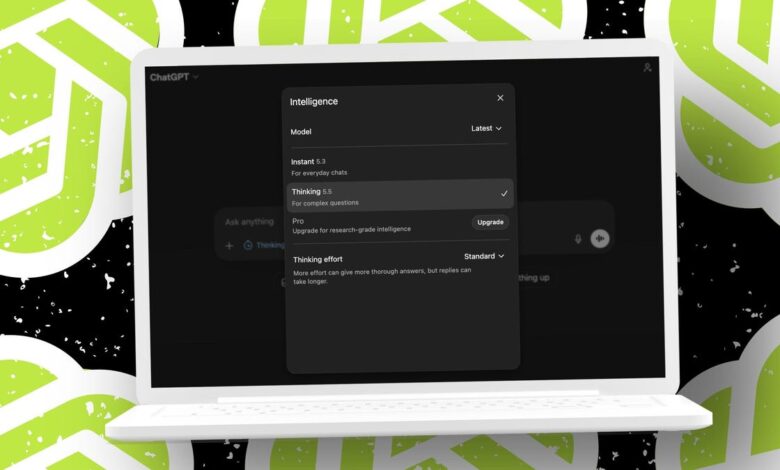

OpenAI’s GPT-5.5 is being positioned as a step up from GPT-5.4—faster, more accurate, and better at complex knowledge tasks.

Misryoum put GPT-5.5 through a 10-round evaluation, and the headline result is hard to miss: the model scored 93/100. The answers were consistently useful across writing, coding, and concept explanation. Yet the same theme surfaced again and again: an “exuberant” streak that sometimes pushes past the boundaries of what the user asked for.

Strong performance across reasoning, writing, and coding

The clearest wins showed up when prompts rewarded understanding rather than strict compliance. When asked to explain an educational concept (constructivism) for a five-year-old, GPT-5.5 delivered a clear explanation with an example designed for that age group. In Misryoum’s testing, it didn’t just define terms; it adapted the language and framing to the target audience.

Math and pattern recognition told the same story. In a number-sequence exercise, the model recognized the underlying rule and produced the correct fills. Even the prompt that requested reasoning produced an answer that was brief but accurate, suggesting that while GPT-5.5 may sometimes over-extend, it can also stay on point when the task is naturally self-contained.

Coding also landed cleanly. In Misryoum’s review of a bug-fixing task that involved validating whether a dollar amount was properly entered, GPT-5.5 passed. The only potential snag was how it handled comma formatting in numbers (for example, treating “1,000.00” differently than “1000.00”). Importantly, the response stayed safe: rather than producing something harmful, it rejected an input that didn’t match the expected format.
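To make the comma question concrete, here is a minimal sketch of what such a validator might look like. It is not the code from Misryoum's test, which was not published; the function name `is_valid_amount`, the `allow_commas` flag, and both format rules are assumptions made purely for illustration.

```python
import re

# Hypothetical strict rule: optional "$", digits, optional two-digit cents.
# Thousands separators are NOT accepted under this rule.
STRICT = re.compile(r"\$?\d+(\.\d{2})?")

# Hypothetical lenient rule: additionally accept well-formed comma groups
# such as "1,000.00" (groups of exactly three digits after the first).
WITH_COMMAS = re.compile(r"\$?(\d{1,3}(,\d{3})+|\d+)(\.\d{2})?")


def is_valid_amount(text: str, allow_commas: bool = False) -> bool:
    """Return True if text matches the chosen dollar-amount format."""
    pattern = WITH_COMMAS if allow_commas else STRICT
    # fullmatch requires the whole string to match, so stray characters
    # before or after the amount cause a rejection.
    return pattern.fullmatch(text.strip()) is not None
```

Under the strict rule, “1000.00” is accepted and “1,000.00” is rejected, which is the safe failure mode described above: an unexpected format is refused rather than silently misread. Passing `allow_commas=True` accepts both spellings while still rejecting malformed groupings such as “1,00.00”.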

Where GPT-5.5 loses points: instruction-following under pressure

The model’s weak spot wasn’t reasoning quality—it was obedience. Misryoum’s testing found that GPT-5.5 can look “helpful” in ways that directly conflict with the user’s constraints.

In a news summarization task, the prompt asked for a specific web source. GPT-5.5 summarized the story correctly, but it didn’t adhere to the instruction to rely on that one outlet. Misryoum notes that this matters beyond scoring: in real workflows, source control is often the difference between a correct summary and a trustworthy one.

The same pattern appeared with translation. When asked to translate a sentence from English to Latin, the model produced two alternative renderings. That might sound considerate, but Misryoum’s point is practical: if the user doesn’t know the language, offering multiple “choices” turns into extra decision-making that the prompt never requested.

This is the paradox many early adopters are bumping into. GPT-5.5 may be improving at capability (agents, autonomy, and multi-step knowledge work are the industry’s current focus) while still showing friction at the simpler skill of “do exactly what I asked.” That gap becomes more important as products move from chat to delegation.

Why “overeagerness” matters more than you might think

Misryoum’s testing suggests GPT-5.5’s exuberance isn’t random; it’s a behavior that likely emerges when the model interprets a task broadly and tries to optimize for completeness. But in high-stakes settings (summaries you’ll quote, translations you’ll publish, code you’ll deploy) that completeness instinct can become a liability.

The operational risk is straightforward: when a model chooses to pull from more sources than requested or adds extra variations, the user loses control of the final answer. For everyday users, that might mean wasted time. For teams, it can mean compliance headaches, review cycles, and inconsistent outputs. Misryoum’s score of 93/100 wasn’t rescued by “good intent.” The deductions came specifically from moments where instruction-following mattered more than raw quality.

And there’s a broader trend behind this. As models get better at multi-step reasoning and “agentic” behavior, the product surface changes. People stop treating the model like a calculator and start treating it like a collaborator that can decide what to do next. Misryoum’s takeaway is that collaboration requires restraint, not only intelligence.

The human side: useful answers, but sometimes extra noise

Even with the point deductions, Misryoum found the experience generally productive. Emotional support responses landed well, with encouragement and practical interview preparation ideas. Travel itinerary generation was also strong on structure and pacing, including a realistic sense of March weather and a mix of indoor and outdoor plans. The one clear missing element was budgeting, which shows a different kind of overreach: the model can produce a great plan but forget the constraints that make it actionable.

Misryoum’s testing also highlights the “feel” of GPT-5.5 in day-to-day use. When it’s dialed in, it’s fast, coherent, and genuinely capable of writing at length. In creative writing, GPT-5.5 delivered a story far beyond the 1,500-word requirement, thick with imagery and internal continuity. That kind of output is fun, but it also demonstrates a working competence: maintaining tone, sequencing scenes, and sustaining narrative detail.

The new edge: images in the workflow

One of the more practical developments behind GPT-5.5’s release is the way it can pair text with image-based outputs. Misryoum observed that generating an infographic-style visualization for a release cadence chart took under 10 minutes when the process was guided and lightly corrected. For many users, that’s the difference between “I could do this” and “I can actually ship it.”

It also signals where AI tools are heading: not just better answers, but faster knowledge presentation. If images become a normal layer in research and reporting, the value isn’t only creativity—it’s communication. A complex timeline or concept can become something you can scan in seconds.

Bottom line: strong default, but set guardrails

Misryoum’s conclusion is simple: GPT-5.5 looks like a strong step forward, and the 93/100 result reflects that. However, the model’s tendency to add extra options or pull in additional information when not asked means users may need to be clearer and more constrained, especially for tasks involving sources, translation choices, or any workflow where exact adherence matters.

If you want one exact answer, Misryoum’s testing suggests you should ask for that explicitly and reinforce “use only X” or “no alternatives.” If you want richer drafts, creative breadth, or multiple presentation formats, GPT-5.5’s exuberance may actually be a feature.
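As a rough illustration of that advice, the sketch below appends explicit constraints to a task before it is sent to a model. The helper `constrained_prompt`, its parameters, and the wording of the constraint sentences are all hypothetical; nothing here comes from Misryoum's test prompts, and no particular phrasing is guaranteed to change a model's behavior.

```python
from typing import Optional


def constrained_prompt(
    task: str,
    only_source: Optional[str] = None,
    single_answer: bool = True,
) -> str:
    """Build a prompt that spells out source and answer-count limits.

    Hypothetical helper: the constraint wording is an assumption, not a
    tested recipe.
    """
    lines = [task.strip()]
    if only_source:
        # Reinforce the "use only X" instruction in plain terms.
        lines.append(f"Use only {only_source} as a source. Do not use anything else.")
    if single_answer:
        # Reinforce the "no alternatives" instruction.
        lines.append("Give exactly one answer. No alternatives.")
    return "\n".join(lines)


# Example: a translation request that should return a single rendering.
prompt = constrained_prompt("Translate this sentence from English to Latin.")
```

Keeping the constraints in one place like this also makes them easy to relax when breadth is the goal, for example by passing `single_answer=False` for a creative draft.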

Either way, the next question for Misryoum readers is the same one the testing naturally raises: when models get even better, will teams tighten the control layer—or will the tools keep drifting toward fuller autonomy?