Google AI classified Pentagon deal: employee ‘ashamed’ sparks backlash

A Google DeepMind researcher criticized the company’s classified Pentagon AI agreement, reigniting internal and public debate over guardrails for high-stakes military use.

A Google DeepMind researcher said he felt “incredibly ashamed” after the company signed a deal that lets the Pentagon use its AI models for classified work.

The controversy centers on a classified contract amendment, part of a broader relationship between Google and government agencies that already covers unclassified projects. For many workers watching the internal policy debate, the classified component matters as much as the legal structure behind it: it shifts AI from support tools in ordinary settings to systems that operate under secrecy, stricter access controls, and higher political scrutiny.

Classified AI work meets internal trust questions

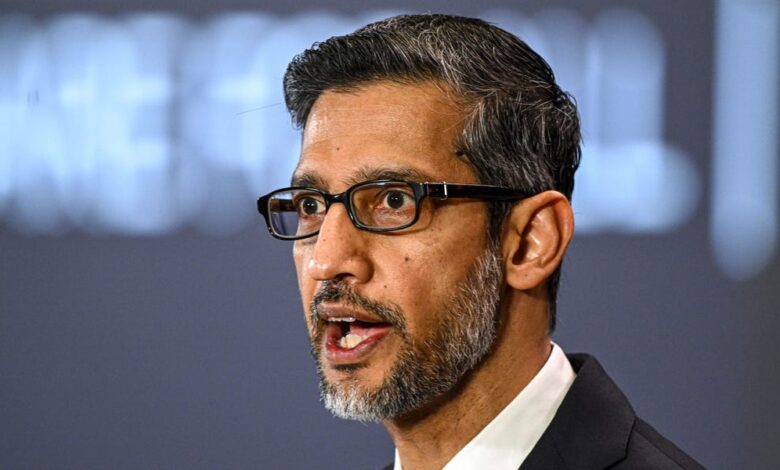

The employee, Andreas Kirsch, described the decision as shameful and argued that Google moved ahead before regulations and guardrails had caught up. He also pointed to employee concerns raised earlier through a letter urging CEO Sundar Pichai not to proceed with the Pentagon’s use of company AI in classified contexts. The underlying worry is not only accuracy or performance, though those matter, but also the potential for AI to be used in ways people inside the industry see as ethically dangerous.

According to Google’s response, the agreement is an amendment to an existing contract, and the company works with government agencies across both classified and non-classified efforts. The company says its work can include logistics, cybersecurity, translation, fleet maintenance, and protection of critical infrastructure. Google also stressed a commitment to the view that AI should not be used for domestic mass surveillance or autonomous weaponry without appropriate human oversight.

The business logic behind defense contracts—and the reputational cost

From a business perspective, defense deals are a logical extension of what top AI vendors already sell: compute, model access, and specialized deployment for tasks that governments need quickly and at scale. Classified projects also tend to come with long procurement timelines, dedicated contracting teams, and strict compliance requirements, meaning the value is often not just in revenue but in strategic positioning. Once a vendor is “in the loop” for sensitive work, it can deepen relationships that are difficult to replicate.

But those same features raise reputational stakes. When employees publicly object, the debate becomes larger than one contract: it turns into a proxy for whether the company is keeping its promises about the boundaries of AI use. In practice, staff objections can affect hiring, morale, and internal governance, even when external regulators do not immediately intervene. For a company whose brand is closely tied to public trust, the cost of being perceived as “ahead of ethics” can be hard to quantify and slower to contain than the revenue gained from a contract amendment.

Why the “guardrails gap” is becoming a market issue

What Kirsch and many critics are pointing to is a recurring problem across the AI industry: technology moves faster than governance, and corporate policies can shift in ways that staff experience as weakening constraints. Google previously revised aspects of its AI principles, removing pledges that it would not use AI for weapons or surveillance, and company leaders told staff to expect more defense work. The result is a direct tension between legal permissibility and internal ethical comfort.

For readers trying to connect this to real-world impacts, think beyond the word “classified.” Classified work often means that systems can be embedded into military workflows where decisions must be timely, imperfect information is common, and oversight may be more complex. Even if human oversight is required in principle, employees worry about how oversight functions in practice when AI outputs become part of targeting, surveillance, or operational planning.

A broader trend: governments buying AI—companies absorbing backlash

The Google situation also echoes a wider pattern: governments increasingly purchase AI capabilities, including sensitive deployments, as they seek advantages in intelligence, logistics, translation, cybersecurity, and operational readiness. That demand is pulling major tech companies into the center of national security conversations.

The political risk for vendors is that every contract becomes a test case. If competitors are perceived as more cautious, customers may weigh not only technical performance but also perceived ethical alignment. If vendors are perceived as too willing to work around constraints, employees and the public can react, potentially affecting long-term trust. In other words, guardrails are not only a compliance matter; they are also a competitive differentiator.

What comes next for Google and the AI ethics debate

In the short term, the immediate question is how Google will manage the internal dispute and whether additional policy changes or oversight mechanisms follow. In the longer term, classified AI contracting will likely intensify pressure on the industry to create clearer boundaries for high-risk use cases, especially where the line between “supporting humans” and “amplifying dangerous choices” can blur.

If the backlash grows, it may also influence procurement behavior, with agencies and legislators looking for stronger assurance that human oversight is meaningful rather than symbolic. For Google, the challenge is balancing lawful government demand with the internal trust that sustains its AI teams. For the market, it’s a reminder that AI governance is no longer just a moral debate; it’s becoming a core operating condition for doing business in the most lucrative, high-stakes environments.