ChatGPT’s New Image Engine: Impressive Pictures, Big Knowledge Gaps

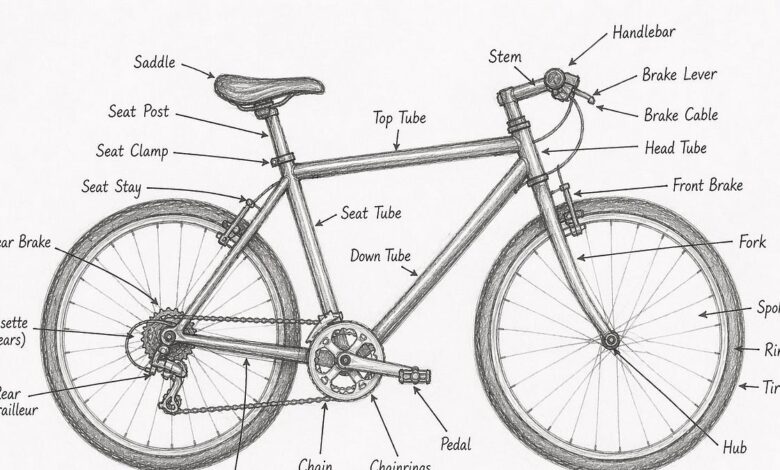

ChatGPT image – New image outputs from ChatGPT can look convincing, but examples involving bike diagrams show frequent labeling mistakes and weak real-world understanding.

ChatGPT’s “powerful new image engine” has sparked excitement online—especially when the results look polished at first glance.

But zoom in, and the story shifts from wow to “wait, that doesn’t make sense.” In a widely discussed demonstration, the system produced a labeled image of a bike that seemed to improve on earlier attempts. The preview was striking enough to prompt reactions along the lines of “problem solved.” Yet the detailed labels told a different tale, revealing errors that are more than just cosmetic.

One of the most telling issues is the mislabeling of functional parts, such as brake components: labels land in the wrong places or describe parts that aren’t actually where the picture implies they should be. There’s also the problem of labels pointing to empty space. On a casual scroll, these mistakes can slip by. For anyone who knows how bikes are built and how they work, they don’t. The diagram may be neat, but it behaves like a catalog with gaps: it can place text and shapes, yet struggle to map them to correct real-world relationships.

This isn’t just a critique of a single picture. It reflects a broader challenge that keeps coming up with image generation tools: the difference between visual plausibility and functional understanding. Modern systems can learn patterns from huge amounts of data, producing images that resemble what people expect to see. But when asked to represent mechanisms, where placement, orientation, and purpose must all align, small inconsistencies become visible fast. A bike isn’t just a “bike-shaped object.” It’s a system of interacting parts, each with a location and role.

That gap showed up even more clearly when the task became harder. When asked to draw a taller-than-average tandem bike with a bike rack and panniers, an arrangement that’s not commonly found as a standard template, the output introduced a fresh set of strange label choices. The result looked like it was assembling a scene from pieces, but not truly reasoning about what those pieces would do together. For a bike enthusiast, some labeling choices conflict with how real tandems are typically configured, including how derailleurs, brake components, and rack-mounted elements are positioned.

There’s a human angle to this, too. Image-generation excitement is fueled by the idea that these tools can help people explain, visualize, and learn. When the visuals are wrong in ways that matter, especially in technical diagrams, they can mislead readers who don’t know enough to question them. That’s not a minor inconvenience. It turns an educational moment into a risk: someone may trust a diagram for a purchase decision, a repair plan, or a basic understanding of how the machine works.

Why the “errors” matter more than the first impression

Misryoum readers are often reminded that diagrams aren’t just images; they’re instructions. When labels point to the wrong components, or combine elements from different designs (for example, mixing disc-brake expectations with caliper-style visuals), the tool stops being a helpful reference and starts acting like a confident guess. The pattern suggests the image engine is strong at aesthetics and general layout, but weaker at domain-specific correctness.

The tandem test shows where pattern-matching runs out

When people talk about AI “understanding,” they usually mean more than recognizing objects. They mean the ability to connect parts correctly: where components belong, what they connect to, and what they do. For bikes, that’s especially strict. A derailleur isn’t interchangeable with a rack; a rear brake isn’t a blank label waiting to be placed wherever space looks convenient.

What this means for the next wave of image tools

In the near term, the most practical approach is to treat generated diagrams as drafts, not references. For everyday users, that means cross-checking details when the topic is mechanical, medical, safety-related, or otherwise domain-bound. For developers and product teams, it means tightening the link between visual output and grounded knowledge, so that labels aren’t just text overlaid on shapes but correspond to the real-world structure those shapes represent.
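For readers who build such tools, one simple form that check could take is sketched below. It is an illustration only, not a description of how ChatGPT or any other image engine works: the `Box`, `Label`, and `check_labels` names are hypothetical, and the sketch assumes that component regions are available from some separate, trusted source. It flags the two failures described earlier, labels that point to empty space and labels that land on the wrong part.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Hypothetical region of a known component, in image coordinates."""
    name: str
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, x: float, y: float) -> bool:
        return self.x0 <= x <= self.x1 and self.y0 <= y <= self.y1


@dataclass(frozen=True)
class Label:
    """Hypothetical label: its text and the point its leader line targets."""
    text: str
    x: float
    y: float


def check_labels(labels, boxes):
    """Return (label text, problem) pairs for labels that fail the check."""
    problems = []
    for label in labels:
        # Names of every component region that contains the label's target.
        hits = [b.name for b in boxes if b.contains(label.x, label.y)]
        if not hits:
            problems.append((label.text, "points to empty space"))
        elif label.text not in hits:
            problems.append((label.text, f"points to {hits[0]}"))
    return problems


# Made-up example data; the coordinates carry no real meaning.
boxes = [
    Box("rear derailleur", 10, 60, 20, 75),
    Box("front brake", 80, 20, 90, 30),
]
labels = [
    Label("rear derailleur", 15, 70),  # inside the matching region
    Label("front brake", 15, 65),      # lands on the derailleur instead
    Label("rear brake", 50, 50),       # lands on nothing
]

for text, problem in check_labels(labels, boxes):
    print(f"{text}: {problem}")
```

A check like this only catches placement errors. It says nothing about whether the drawn part itself is mechanically plausible, which is the harder problem the tandem example exposes.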

And for those watching this space, the most telling signal isn’t whether the images look good—it’s whether the system can keep its promises when the test becomes specific.