Big G precision puzzle: NIST adds a new measurement

NIST has released the results of a decade-long effort to measure the gravitational constant. The work doesn’t settle the long-standing disagreements, but it strengthens the evidence base for understanding why “Big G” is so hard to pin down.

“Big G” is supposed to be a simple number—but gravity refuses to be simple.

The gravitational constant, usually written as G and nicknamed “Big G,” sets the scale for how strongly masses attract each other. In practice, measuring it is difficult because gravity is extraordinarily weak. Even in a lab, the gravitational pull from nearby objects, and especially Earth’s own field, creates a noisy background that can blur the signal researchers are trying to isolate.
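
To make “extraordinarily weak” concrete, here is a rough sketch applying Newton’s law, F = G·m₁·m₂/r², to two hypothetical 1 kg lab masses 10 cm apart and comparing the result with the test mass’s weight in Earth’s field. The value of G (the CODATA 2018 recommended value), the standard-gravity constant, and the chosen masses and distance are illustrative assumptions, not parameters of the NIST experiment.

```python
# Illustrative constants (assumptions, not taken from the NIST work):
G = 6.67430e-11        # gravitational constant, m^3 kg^-1 s^-2 (CODATA 2018)
G_EARTH = 9.80665      # standard gravity at Earth's surface ("little g"), m/s^2


def newton_force(m1: float, m2: float, r: float) -> float:
    """Newtonian attraction in newtons between two point masses (kg) a distance r (m) apart."""
    return G * m1 * m2 / r**2


def ratio_to_weight(m_source: float, m_test: float, r: float) -> float:
    """Attraction from a source mass, as a fraction of the test mass's weight in Earth's field."""
    return newton_force(m_source, m_test, r) / (m_test * G_EARTH)


if __name__ == "__main__":
    # Hypothetical setup: two 1 kg masses, 0.1 m apart.
    print(f"force: {newton_force(1.0, 1.0, 0.1):.3e} N")                # force: 6.674e-09 N
    print(f"ratio to weight: {ratio_to_weight(1.0, 1.0, 0.1):.1e}")     # ratio to weight: 6.8e-10
```

In this toy setup the signal is less than a billionth of the test mass’s weight, which is why small background effects can swamp it.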

That’s why precision metrology teams have spent centuries chasing a value for Big G that is consistent across experiments. While other fundamental constants are pinned down with extraordinary accuracy, G remains a black sheep: different experiments have historically disagreed at a level of about one part in 10,000. The mismatch is small, but in precision science small disagreements can be clues, pointing either to hidden systematic errors or to limitations in how we measure the gravitational effect.
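
As a rough worked estimate of what “one part in 10,000” means in absolute terms, assuming the CODATA 2018 recommended value G ≈ 6.674 × 10⁻¹¹ m³ kg⁻¹ s⁻² as the reference point:

$$
\frac{\Delta G}{G} \approx 10^{-4}
\quad\Rightarrow\quad
\Delta G \approx 10^{-4} \times 6.674\times10^{-11}\ \mathrm{m^3\,kg^{-1}\,s^{-2}}
\approx 6.7\times10^{-15}\ \mathrm{m^3\,kg^{-1}\,s^{-2}}
$$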

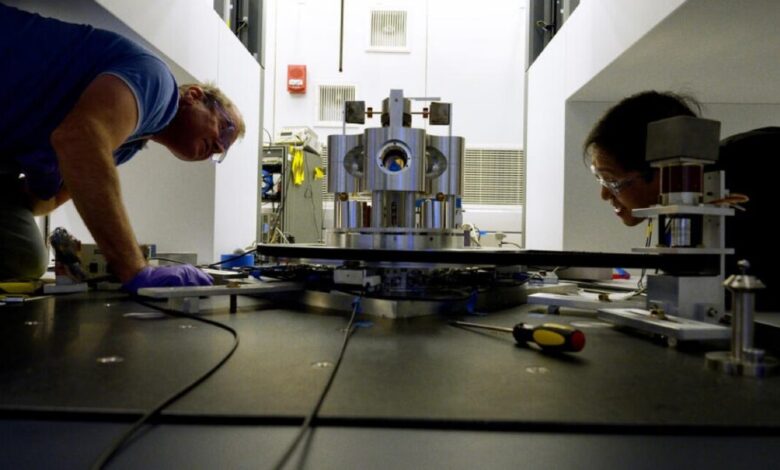

In the latest effort, Misryoum reports, the National Institute of Standards and Technology (NIST) carried out a long-running project aimed at a specific source of uncertainty: the team replicated one of the more divergent recent results, working for roughly a decade to reproduce the experimental approach and recheck the assumptions behind it. Misryoum notes that the team’s new findings, published in the journal Metrologia, don’t fully resolve the broader discrepancy in the reported values of Big G. Still, the measurement matters, because physics advances when the community can compare like with like and gradually narrow the space where the “wrong” answers might be hiding.

Misryoum frames the core challenge clearly: gravity’s weakness is exactly what makes it so hard to measure. In an experiment, researchers have to detect incredibly tiny changes in force or acceleration. But those tiny signals overlap with unwanted gravitational influences: sometimes from the Earth itself (often described as “little g”), sometimes from the environment, and sometimes from the equipment and its surroundings. As a result, the measurement can be sensitive to factors that are not always obvious at first glance, such as alignment errors, mass distribution details, vibration, thermal drift, and how the experiment models the gravitational field.

This is where replication becomes more than a bureaucratic step. When experiments disagree, replicating a disputed approach is a way to test whether the difference comes from the method itself or from experiment-specific conditions. Misryoum highlights that NIST’s decade of work serves as an additional “anchor point” in the evolving record of Big G measurements, useful even though it doesn’t produce the long-awaited single agreed value.

The story of Big G stretches back further than most people realize. Newton’s law of universal gravitation, published in the late 17th century, provided the conceptual framework for what G represents. Newton even considered measuring the attraction indirectly with a pendulum, but reasoned that the effect would be too small to detect. In the 18th century, the Royal Society organized efforts to estimate the density of the Earth as a proxy for determining the gravitational constant, drawing on variations of Newton’s pendulum concept. The constant’s modern notation came later, in the 1890s, after scientists began to formalize the idea of a single universal scaling factor.

What’s different today is not the basic question but the measurement philosophy. Misryoum underscores that the quest for Big G sits at the intersection of fundamental physics and precision engineering. The constant doesn’t just interest theoreticians; it’s a practical calibration target for how we interpret gravitational interactions, whether in experiments designed to probe gravity itself or in technologies that rely on mass and force measurements. If G is measured inconsistently, the inconsistency can ripple into error budgets wherever gravity enters indirectly.

Another subtle point is that the signal-to-noise problem doesn’t go away just because instruments get better. Even if detectors become more sensitive, the experiment still has to contend with external gravitational contributions and systematic effects that behave like stubborn background terms. That means the community must keep refining not only hardware but also modeling choices and experimental control. Misryoum’s emphasis on replication suggests that the next phase of progress may come less from one bold new design and more from systematically testing which setups truly isolate gravity’s contribution.

Looking ahead, the most likely path to convergence is incremental: more independent measurements, careful cross-checking, and methods that expose the dominant sources of error rather than averaging them away. Misryoum’s takeaway is that NIST’s update doesn’t “fix” Big G on its own, but it reduces uncertainty about what does and doesn’t work, and it keeps the long-running puzzle moving from speculation toward clarification.

For now, Big G remains measurable, but not yet settled. Each new attempt adds a data point—and occasionally, it reveals where the hidden difficulties really live.