AI text “looks human” — and the reputational cost surprises everyone

AI disclosure – New experiments suggest most people don’t suspect AI in personal messages by default. But admitting AI can still trigger a backlash.

Personal messages are supposed to reveal character. Yet new research suggests many people can’t tell when a text was written by AI—and the consequences may be bigger than most users expect.

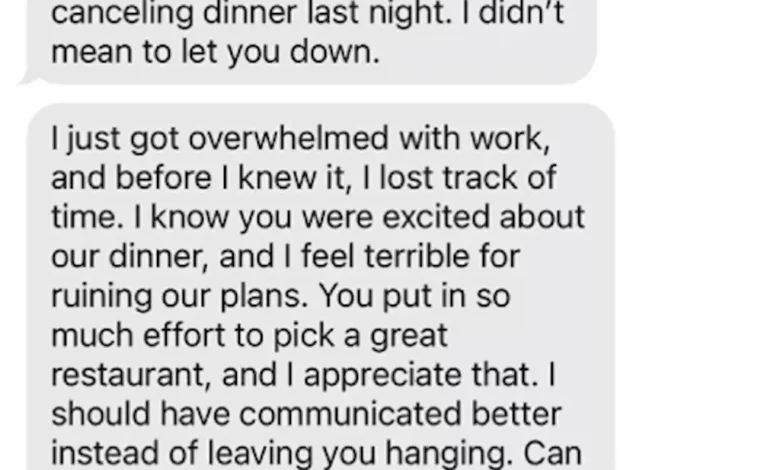

Researchers behind two experiments recruited more than 1,300 people in the U.S. between the ages of 18 and 84, then tested how participants judged the sender based on authorship cues. The core setup was simple: participants read AI-generated messages (including a fictional apology) and were either told one of several things about who wrote them or told nothing at all. In the “nothing disclosed” condition, the text appeared just like an ordinary personal message.

The key result was an “AI disclosure penalty.” When participants were told the message was definitely AI-generated, they rated the sender more negatively (as lazier, less sincere, and making less effort) than when they believed the same message came from a human. In other words, disclosure mattered. A sender who openly admitted using AI took a reputational hit.

People don’t assume AI by default

The surprising part is what happened when no authorship information was provided. Participants formed impressions that were essentially as positive as those of participants who were told the message was genuinely human. They didn’t automatically treat the message as “suspicious” just because it sounded polished or personal.

That finding suggests a gap between what people fear about AI and what they actually detect in daily life. Most recipients appear to default to the assumption that a text message—especially one that reads smoothly and includes emotional language—reflects a real person’s effort.

Missing from the research is the kind of paranoia that often shows up in high-stakes environments. In casual settings like friendships, dating, and everyday workplace communication, skepticism may simply never get triggered.

Your effort signals may be easier to fake than you think

Human relationships often run on tiny cues. A written apology, a thoughtful check-in, or the decision to reply quickly can all be interpreted as evidence of sincerity, competence, and respect. When AI can mimic those cues closely enough to pass for human, the “effort signal” loses part of its meaning.

Misryoum readers can feel the practical impact in common moments: an apology that arrives instantly, a supportive message that sounds unusually perfect, or a breakup text that feels rehearsed. If people don’t naturally suspect AI, then the emotional interpretation might lean more on tone than on truth.

Over time, that can reshape expectations. If recipients treat writing as a weaker indicator of authenticity, they may shift toward other signals—calls, voice notes, in-person conversations, or “high-friction” communication that’s harder to outsource.

Why admitting AI can backfire

The reputational penalty described in the experiments creates a paradox. People appear willing to accept AI-generated messages when they think a human wrote them. But once AI use is disclosed, trust declines.

Even more striking: the study found little effect from participants’ own familiarity with generative AI. Heavy users, those employing AI frequently, penalized AI disclosure slightly less than nonusers, but they still didn’t become more skeptical by default when authorship wasn’t disclosed. In short, “using AI yourself” didn’t automatically make you a better detector.

That matters because it changes incentives. If recipients don’t suspect AI unless told, then people who use AI secretly may face less social risk. Meanwhile, people who are transparent may incur a credibility cost even if the message quality is strong.

What this could mean for jobs, dating, and trust

The research connects to a broader trend that Misryoum has been tracking: organizations and individuals are recalibrating how they assess effort and legitimacy in an AI-saturated world. In job hunting, for example, some employers have already begun discounting the value of cover letters, treating them as easier to generate than to evidence. As scrutiny increases, evaluators lean more on references, recommendations, and real-time interactions.

Personal life may follow a similar logic. If texts become unreliable markers of effort, people may place greater weight on behaviors that show time, risk, and spontaneity—things harder for AI to fully simulate.

Where suspicion may eventually grow

The experiments don’t imply that people never detect AI in the real world. Context likely changes everything. In academic settings, where originality and authorship matter, suspicion may be more automatic. Misryoum expects future research to explore the “switch” that flips recipients from trust to doubt: cues like repeated phrasing patterns, unrealistic specificity, or mismatches between message tone and a person’s typical communication style.

For now, one practical takeaway emerges from the data: recipients may judge sincerity based on writing quality more than on authorship. If your goal is to be read as heartfelt, the safest routes may be the communication formats that are harder to outsource: phone calls, voice messages, and face-to-face conversations.

In a world where AI-generated text can feel indistinguishable from human effort, trust may hinge less on what’s written and more on how it’s delivered—and whether people feel safe assuming it was genuine.