AI startup switched from ChatGPT to Claude—why it canceled the plan

A New York AI startup canceled its ChatGPT subscription and leaned into Claude, citing faster workflows, better nuance, and more human-like writing, while still battling occasional bugs and hallucinations.

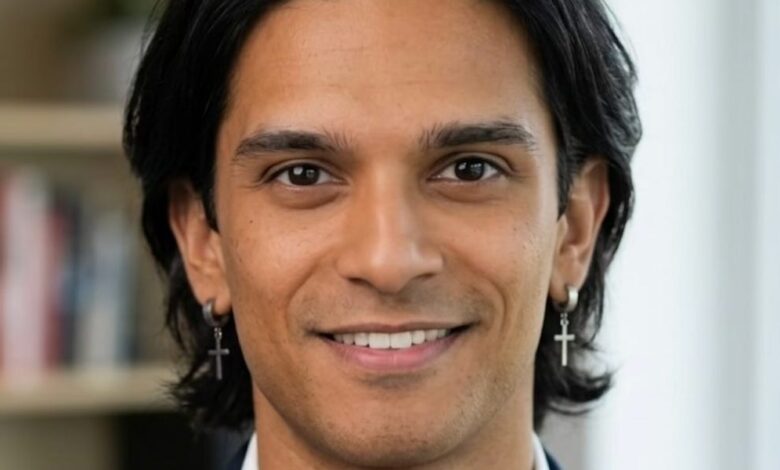

Sidhant Bendre, a 26-year-old cofounder of New York-based Oleve, says his company canceled its ChatGPT plan months ago and moved toward Claude instead—after a shift he describes as “pulled, not pushed.”

For Oleve, AI isn’t an experiment bolted onto day-to-day work; it’s built into the operating rhythm. Bendre says the team has relied on models across coding, marketing, and even hiring workflows. In that environment, the choice of model isn’t about hype; it’s about whether the tool consistently reduces the time spent revising work and redoing tasks.

His first takeaway from switching to Claude is straightforward: the output feels more usable. “We weren’t pushed away from ChatGPT; we were pulled into Claude,” Bendre explains, pointing to a practical difference in day-to-day execution. After Anthropic released its Claude 4.5 model suite last fall, Oleve saw fewer bugs when generating code. Even without major frustrations with ChatGPT, Bendre says the company kept noticing a specific advantage: they spent less time correcting the model and more time moving forward.

That time-saving effect matters because software teams don’t just need speed; they need speed that holds up under iteration. In early-stage and product-heavy environments, a model that generates plausible results but requires frequent cleanup can quietly become a drag. Bendre frames Claude as the kind of assistance that helps compress the workflow, allowing the team to iterate faster instead of cycling between drafts and fixes.

More “human” writing, less forcing

Beyond coding, Bendre says Claude changed how his team experienced AI-generated text. When Oleve used ChatGPT more heavily, he noticed a pattern: responses sometimes didn’t sound human, in a way he could sense immediately. He points to what he describes as overly verbose writing and a tendency to rely on emojis in ways he wouldn’t.

For consumer software teams, marketing copy and internal documents still need to read like they came from a person who understands tone. Bendre says Claude was better at mimicking human writing, and he connects that to how the model handles style. He also notes that prior to Claude’s 4.5 release, students had been discussing how it could replicate writing styles after being shown examples, something Oleve later leaned on as it expanded AI into more parts of its operations.

Nuance is the deciding factor

The most meaningful difference, according to Bendre, isn’t just language style or raw output quality; it’s context and nuance. He describes Claude as understanding when a request needs to be concise versus when it needs supporting detail. In his experience, if he feeds Claude a larger research document but asks for a smaller, specific output, the model more consistently matches the level of detail he’s actually seeking.

By contrast, he says ChatGPT at times felt like it was “overcompensating” when deciding what to include, generating too much content for the intended scope. That can create a workflow tax: instead of refining a draft once, teams may need to prompt again and again just to reduce verbosity or redirect focus. For Oleve, that extra prompting wasn’t just annoying; it interrupted the momentum the company was trying to build.

Bendre also ties the decision to how Oleve structured its AI usage. As the company integrated AI into more workflows after switching, it made more sense to invest in model-driven automation when the team believed the payoff would persist. He says Claude helped with development time by enabling automation using blueprints the team already had, shifting attention toward the product rather than the building blocks.

The trade-offs: bugginess, hallucinations, and trust

Even as Oleve prefers Claude, Bendre stresses that it’s not flawless. He says Claude has introduced its own issues, including messages or chats that appear to disappear. For a startup, missing context can be especially costly, because it disrupts continuity across teams and iterations.

He also points to the ongoing challenge of hallucinations: instances where a model produces output that looks confident but isn’t reliable. Bendre says he’s still seeing these errors occasionally, and that the need to “push back” to get the model to self-correct can undermine confidence. Even when web search is involved, he frames accuracy as something that still requires active judgment rather than blind trust.

What this reveals about AI adoption in business

Bendre’s story captures a broader reality for companies using AI: switching models is rarely about finding a “perfect” tool. It’s about finding the one that reduces friction in your specific workflow—coding, writing, summarization, and decision support each put different demands on a model.

His emphasis on nuance suggests a business reason behind the switch: executives and product teams value outputs that match intent the first time. A model that over-explains or misses what matters can generate extra work, even if the content is technically correct. Conversely, a model that reliably sizes its output (context when needed, brevity when asked) can create compounding efficiency across a team.

At the same time, the reliability gaps he mentions, disappearing chats and intermittent hallucinations, are reminders that operational maturity still matters. Teams adopting AI at scale have to build review habits, verification steps, and workflow guardrails. For Oleve, the decision to keep using Claude seems less like a loyalty pledge and more like an ongoing evaluation.

Bendre says he isn’t locked into Claude. If another model suite launches products that genuinely improve his day-to-day value, he would try it. For now, his bottom line is that Claude feels closer to the promise of AI: taking away busywork and giving people back time to think at a higher level. In the startup world, that difference, measured in fewer revisions and faster iteration, often matters more than any single benchmark.