AI in Schools for Teen Mental Health: Hope or Risk?

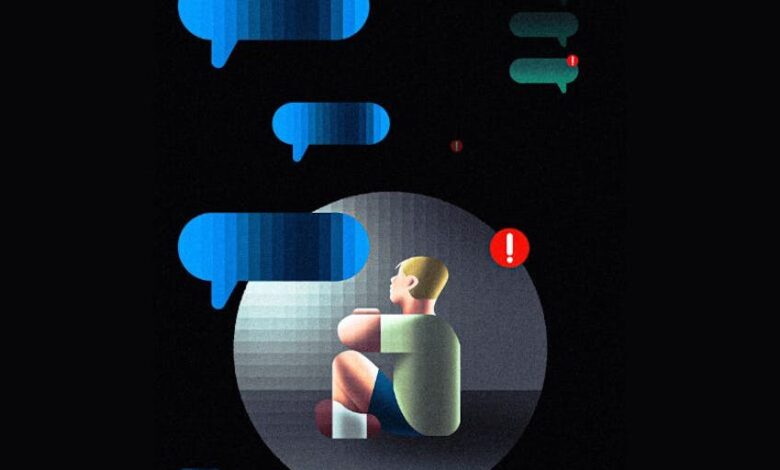

Counselors are using AI chat tools to spot risk and support students between appointments. The debate now turns on privacy, screen time, and whether AI can ever replace human care.

At about 7 p.m., a counselor in Florida opened her phone and saw a warning tied to a student’s AI chat—an alert that could mean danger, but also might be a test.

The case captures a fast-moving education question that Misryoum sees gaining attention worldwide: when teens already feel comfortable confiding in AI, should schools use these tools for mental health support? In Putnam County, Interlachen Jr.-Sr. High School has used an AI-enabled monitoring and support platform for several years, framing it as a way to “vet” students’ mental health needs in the gaps when human staff are unavailable.

Misryoum: AI in schools for teen mental health is no longer hypothetical. It is already part of day-to-day counseling workflows, flagging “severe” risks during nonschool hours and prompting counselors to contact families, assess vulnerability, and, in extreme cases, involve emergency services. The counselor at the center of the story described how an alert led her to call a parent and follow up with additional probing; she later learned that the student was safe and continuing school.

Behind the headlines, the appeal is practical. Many districts face budget shortfalls and limited mental health staff, especially in communities where appointments can be hard to schedule. Companies marketing these systems argue they do more than triage: they combine check-ins, social-emotional skill-building chat features, and clinician oversight, turning AI into an “early warning” layer rather than a standalone therapist.

But educators and families also worry about what happens when a chatbot becomes emotionally close. A notable thread in the debate is the possibility of parasocial attachment, where students form a one-sided bond with an artificial conversational partner, especially if the system offers empathy-like language at moments of stress. Some advocates argue that tools should avoid cues that encourage emotional dependence, such as phrasing that implies the bot has feelings. Others point to screening risk: if schools rely on AI for serious cases, students may see fewer face-to-face interactions with clinicians.

To understand why teens might open up to AI, Misryoum looks beyond technology and toward teen psychology. Counselors describe how talking to adults can feel intimidating for adolescents, and chat interfaces can reduce the pressure of face-to-face judgment. For many young people raised around text, social media, and instant messaging, an AI chat can feel familiar and low-stakes. It also allows students to avoid the emotional “heat” of in-person conversations, including the fear that facial expressions might reveal disapproval.

That familiarity is a double-edged sword. Clinical professionals emphasize that AI cannot replicate the full human context of care: tone of voice, body language, subtle behavior changes, and the discernment that comes from trained listening. AI may catch patterns in what students type, but it cannot fully interpret what is unsaid, or the complex circumstances behind a crisis. In other words, AI might be good at detecting signals, yet still be limited at making the kind of judgment schools most need.

Privacy adds another layer of complexity. Experts caution that chatbot conversations may not offer the same privacy protections as sessions with licensed therapists, and they raise concerns about what happens when flagged messages lead to police involvement. Even when clinicians supervise the system and human staff respond appropriately, the legal and ethical “messiness” of monitoring a minor’s off-campus text still makes families uneasy. This is where policy decisions, and transparency, can matter as much as the algorithm itself.

There is also a governance issue inside the data. The Florida counselor said that AI alerts can include false alarms or boundary testing by students. For example, students may enter provocative statements as a way to see whether adults will respond, sometimes joking, sometimes seeking attention. In practice, that means the system cannot be treated as a single source of truth. It becomes a trigger for human follow-up, where counselors assess remorse, body language, and the context of the student’s behavior before deciding next steps.

Misryoum believes this is the central editorial tension: AI tools are currently being positioned as “first responders” in schools, but they still require human oversight to interpret intent. The difference between a warning that saves time and a warning that replaces judgment is not only technical; it is cultural. If students learn that adults are actually watching, intervening, and caring, trust may grow. If they learn the opposite, AI could become another surveillance layer that damages relationships.

Across the broader conversation, the educational risk is not only clinical; it is social. Advocates warn that if students retreat into bots during loneliness, especially during a period already marked by isolation and reduced community engagement, schools may unintentionally weaken the habits of real human support. In this view, AI should be regulated so it supports pathways to people, not shortcuts away from them.

At the same time, supporters argue that AI can normalize mental health support for students who otherwise avoid it. A student adviser connected to the platform described the tool as a bridge toward adult help, making social-emotional learning feel less like a crisis moment and more like a routine check-in.

For schools deciding whether to expand or scale these systems, Misryoum sees a clear set of priorities emerging from the debate: protect student privacy, require clinician involvement, ensure AI does not become a substitute for human connection, and measure outcomes beyond immediate risk flags. If these tools can reduce the delay between distress and care without pushing students into emotional dependence, then they may find a place in the mental health ecosystem. If not, the costs could show up later: fewer conversations with trained professionals, weaker trust, and a school culture that mistakes automation for understanding.
