AI coding for kids: 5-year-old builds a game—and the rules matter

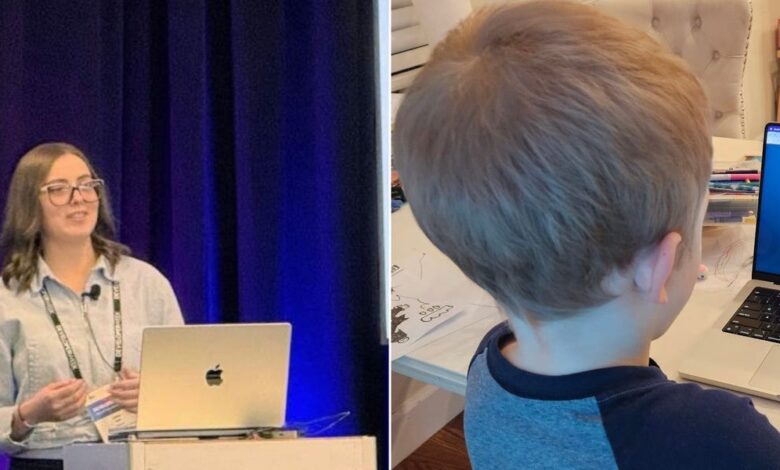

AI coding – A parent and AI leader describes how a 5-year-old used a voice-based coding tool to build a game—while keeping tight privacy, time, and thinking boundaries.

A five-year-old waking up wanting to build a game is already a story about curiosity. Add AI to the mix, and the real question becomes how to guide the tool without outsourcing the thinking.

In one at-home experiment shared by Misryoum, the parent, who is also an AI and developer-strategy executive, let her child try a voice-based coding app with a simple goal: turn the child’s idea into a playable browser game. The spark was “vibe coding,” using natural prompts instead of technical vocabulary. The child’s excitement kicked in fast, not after a lesson, but in the moment the voice-to-code workflow made the computer feel responsive.

The child began by sketching levels and characters on paper, then moved to the app. Instead of starting with “functions” or “syntax,” the child described game mechanics: typing a word to transform a player into a character, with characters jumping to collect coins. The tool generated something workable, though not exactly as imagined, so the next step was iterative refinement. The child asked for more specific behavior and character naming, and the adult role stayed deliberately light: explain what the tool can do, ask clarifying questions, and help the child translate intentions into prompts.

That process is more than a cute milestone. For families and educators watching AI move from novelty to mainstream learning, the pattern matters: the learning came from play, not from a formal curriculum. The child naturally decomposed the project into parts (characters, movement, and rewards), an early form of structured thinking. The experience also surfaced an often-ignored skill: persistence. When the first result wasn’t perfect, the child learned to respond to feedback by trying again with clearer instructions. Those are habits that will matter long after any single app is forgotten.

There’s also a practical reality behind the excitement. Voice-based coding can lower the barrier to entry, making creative projects feel accessible in a way traditional programming tutorials rarely do, especially for very young learners. But Misryoum’s takeaway isn’t “let kids code unsupervised.” The real value is in the guardrails: ensuring AI acts as an assistant that amplifies a child’s intent rather than a system that replaces the messy, valuable struggle of coming up with ideas.

The parent described one guiding principle repeatedly: AI should never replace thinking. In age-appropriate terms, that means the child should still make the choices: what the game should do, how it should feel, and what should be improved after seeing the output. The adult is there to keep the child in charge of the creative direction while treating the AI like a tool with limits. It’s a subtle but important distinction. Offloading creativity and decision-making to a machine might produce a faster result, but it also risks shrinking the learning that comes from uncertainty, iteration, and personal ownership.

Guardrails also extend to how children understand what AI is. Misryoum’s account emphasizes that children can easily attribute human qualities to systems that respond conversationally. That emotional instinct isn’t “wrong” (it’s normal development), but it can cause confusion about authority, accuracy, and intent. The approach here was simple: clarify that AI is a tool built by people, not a friend or an authority. For parents, that framing can reduce the chance that a child will treat the AI’s outputs as truth rather than suggestions.

Privacy and screen-time boundaries are the other half of the safety equation. Before starting, the family reviewed basic rules: no personal information shared with apps, meaning no real names, addresses, school details, or family specifics. The session also stayed short, under twenty minutes, with more time spent drawing the game on paper than building it on-screen. That time discipline matters because “educational” can become a label that hides risk. In practice, younger children need routines that keep the experience light, supervised, and tied back to offline creativity.

For the broader economy and industry, this type of household use points to a bigger shift underway: AI is becoming a mainstream input method, not just a back-office technology. When families try AI in everyday creative tasks, demand grows for safer design: privacy controls, child-friendly defaults, and interfaces that encourage learning through questioning rather than passive consumption. If developers want these tools to earn trust, they’ll need more than clever prompts. They’ll need transparent guardrails that reflect how children think and how parents manage risk.

Looking ahead, the next phase for AI learning won’t just be about capability. It will be about stewardship: how products help adults set boundaries, how children practice independent thought, and how creative momentum translates into durable skills. Misryoum’s example suggests that when guardrails are clear (thinking stays with the child, personal data stays private, and time stays contained), AI can be less of a shortcut and more of a catalyst. The result isn’t only a game. It’s a mindset: try, adjust, and build again.