AI CEOs’ job-loss warnings: the real investor logic

When AI lab CEOs talk about massive job displacement, investors and corporate buyers often hear strategy, while the public hears fear, timing, and risk.

AI CEOs have become unusually direct about the downside of their own technology.

In recent months, public remarks from leaders at major AI companies have repeatedly returned to one theme: widespread job disruption is likely. Beneath the sound bites, these warnings raise a deeper question: who are these executives really trying to convince, and what outcome are they working toward?

OpenAI CEO Sam Altman has suggested that the effects of AI performing work tasks could become noticeable within a few years. Anthropic CEO Dario Amodei has been more blunt, warning that entry-level white-collar roles could be hit significantly in a short window. DeepMind CEO Demis Hassabis frames the shift as rapid and transformative, comparing its potential economic impact to a faster version of the Industrial Revolution. Meanwhile, Meta CEO Mark Zuckerberg has paired rhetoric with action, confirming layoffs and reallocating savings toward large-scale AI infrastructure.

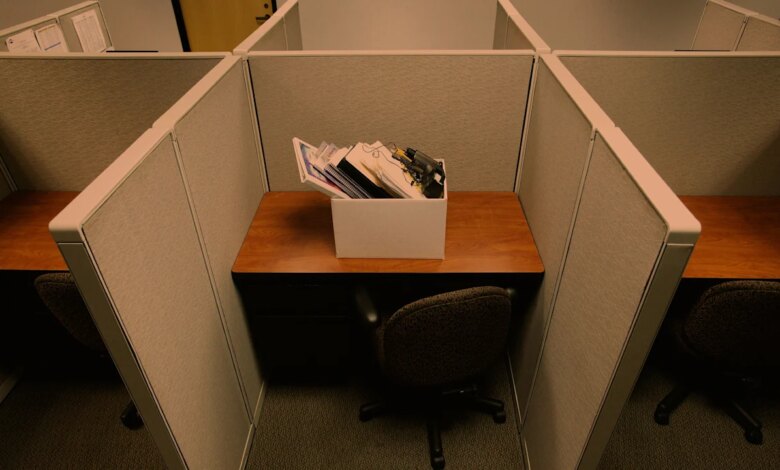

To many observers, these statements can feel like an unsettling contradiction. People hear the warnings and naturally connect them to their own job security, education pathways, and community stability. Public sentiment often follows that emotional logic, including concerns about when displacement might arrive and whether the benefits will be shared widely enough. That gap between how AI leaders talk and how the public receives the message helps explain why trust in AI executives can be so fragile.

The most revealing angle is that the “warning” often functions as a signal rather than a threat. Misryoum readers may notice a pattern in how these CEOs communicate: they emphasize that generative AI can take over tasks, boost productivity, and reshape organizational workflows. To investors and capital markets, that narrative supports a simple investment thesis: own exposure to the builders of the productivity layer. If large portions of corporate work can be automated or restructured, the companies funding data centers, training models, and integrating AI into business systems may be positioned for outsized growth.

Misryoum analysis suggests the CEOs understand this split audience better than critics sometimes assume. Investors may hear “job loss” language as a prelude to efficiency gains, new product categories, and durable demand for AI infrastructure. That framing can also sustain funding momentum when markets are hungry for proof that AI is more than a prototype. In practice, the narrative reinforces the belief that the productivity revolution is approaching, sometimes sooner than traditional corporate transformation cycles would predict.

At the same time, the public conversation is often less about productivity and more about consequences. Many people worry about timing, specifically how quickly layoffs will spread beyond early adopters into entire job families. Others fear misuse: the same automation capability that speeds up business operations can also enable surveillance, disinformation campaigns, and cybercrime. Yet the industry tends to address those concerns indirectly, through policy discussions, partnerships, and engagement with influential stakeholders, rather than speaking to everyday audiences in a way that feels personally accountable.

This difference in communication style matters because it shapes political and social friction. If AI is perceived as coming for jobs without a visible plan for protection, compensation, or alignment with public values, backlash becomes easier to organize. That is especially true when AI infrastructure is tangible and local: data centers, power use, land development, and workforce impacts. When communities push back against the physical footprint of AI buildouts, the debate can quickly shift from abstract labor fears to concrete political leverage.

Misryoum also sees why the issue is hard for companies to fully control: AI adoption isn’t just a technical question. Even if a capability exists on paper (say, automating 90% of a task), real organizations move more slowly. Workflow redesign, governance, risk management, training, and compliance all introduce friction. As a result, displacement can lag behind the underlying technology timeline, which makes the “how soon” debate emotionally and politically charged.

For the AI industry, the job-loss warnings may therefore be doing double duty. They prepare business buyers for structural change, and they assure capital markets that demand is coming in a measurable way. For society, the same words can read as a warning of upheaval, especially when there is no clear, shared roadmap for who benefits next, how workers transition, and what safeguards prevent abuse.

The next phase will likely be defined by whether AI companies can narrow this gap: translating productivity claims into transparent plans for labor transition, communicating safety and misuse mitigation more directly, and addressing the community impact of the infrastructure that powers their models. If that doesn’t happen, Misryoum expects the AI job-loss conversation to keep evolving, less as a technical forecast and more as a defining political and economic fault line.