DeepSeek V4 on NVIDIA Blackwell: 1M Context Comes to Life

1M-token context – DeepSeek-V4-Pro and V4-Flash promise million-token context for faster, more efficient agentic AI—accelerated on NVIDIA Blackwell endpoints.

DeepSeek has rolled out its fourth-generation flagship models, and the headline feature isn’t just “bigger” or “smarter”—it’s the ability to run with up to a 1M-token context window.

That shift is quickly turning long-context AI from an impressive demo into an engineering requirement. In the latest development, Misryoum sees the story converging around two forces: DeepSeek’s new long-context architecture and NVIDIA Blackwell-backed infrastructure meant to make million-token inference practical.

What’s new in DeepSeek V4 (Pro and Flash)

Both models support up to a 1M-token context length, which matters because longer contexts let systems track more than a single prompt-response pair. In agentic setups, the “conversation” can include system instructions, tool outputs, retrieved documents, code fragments, logs, and intermediate reasoning traces, often accumulating across many steps.
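To make the accumulation concrete, here is a minimal sketch of tracking how much of a 1M-token window an agent has consumed. The `ContextBudget` class and the four-characters-per-token estimate are illustrative assumptions, not part of any DeepSeek or NVIDIA API; a real system would count tokens with the model's own tokenizer.

```python
from dataclasses import dataclass, field

CONTEXT_LIMIT = 1_000_000  # tokens; the V4 limit stated in the article


def rough_token_count(text: str) -> int:
    """Crude estimate of ~4 characters per token (an assumption, not a tokenizer)."""
    return max(1, len(text) // 4)


@dataclass
class ContextBudget:
    """Hypothetical helper that tracks token usage across agent steps."""
    limit: int = CONTEXT_LIMIT
    used: int = 0
    entries: list = field(default_factory=list)

    def add(self, kind: str, text: str) -> bool:
        """Record one item; return False if it would overflow the window."""
        cost = rough_token_count(text)
        if self.used + cost > self.limit:
            return False
        self.entries.append((kind, cost))
        self.used += cost
        return True

    def remaining(self) -> int:
        return self.limit - self.used


budget = ContextBudget()
budget.add("system", "You are a coding agent." * 10)
budget.add("tool_output", "x" * 400_000)       # roughly 100k tokens
budget.add("retrieved_doc", "y" * 2_000_000)   # roughly 500k tokens
print(budget.used, budget.remaining())
```

With a smaller window, the same agent would have to summarize or evict earlier entries long before reaching this point.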

Here’s the practical impact Misryoum readers will recognize: if your AI system can retain more of the work in one go, you spend less time juggling partial context windows and more time producing coherent multi-step results.

Why million-token inference is hard—and what V4 changes

DeepSeek’s V4 family addresses the cost of million-token inference with architectural changes centered on the attention component. Misryoum’s takeaway is that these improvements target token-level efficiency and memory pressure, not just benchmark scores. The reported design goal is a 73% reduction in per-token inference FLOPs and a 90% reduction in KV cache memory burden compared with the prior generation.
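A back-of-the-envelope calculation shows why KV cache memory dominates at this scale. The sketch below uses the standard size formula for a plain (uncompressed) attention cache with hypothetical model dimensions; these are not DeepSeek's actual dimensions, and the prior generation's real cache layout may differ. The 90% and 73% figures are the reported goals quoted above.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Size of a plain KV cache: one K and one V vector per head, layer, and token."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


def after_reduction(value, reduction):
    """Apply a fractional reduction, e.g. 0.90 for a 90% cut."""
    return value * (1.0 - reduction)


# Hypothetical dimensions chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 60, 8, 128
TOKENS = 1_000_000

baseline = kv_cache_bytes(TOKENS, LAYERS, KV_HEADS, HEAD_DIM)  # 16-bit values
reduced = after_reduction(baseline, 0.90)

print(f"baseline cache: {baseline / 1e9:.1f} GB per 1M-token sequence")
print(f"after a 90% reduction: {reduced / 1e9:.1f} GB")
print(f"relative per-token FLOPs after a 73% cut: {after_reduction(1.0, 0.73):.2f}x")
```

Under these assumed dimensions, a single million-token sequence would need a cache in the hundreds of gigabytes without compression, which is why a tenfold reduction changes what fits on a node.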

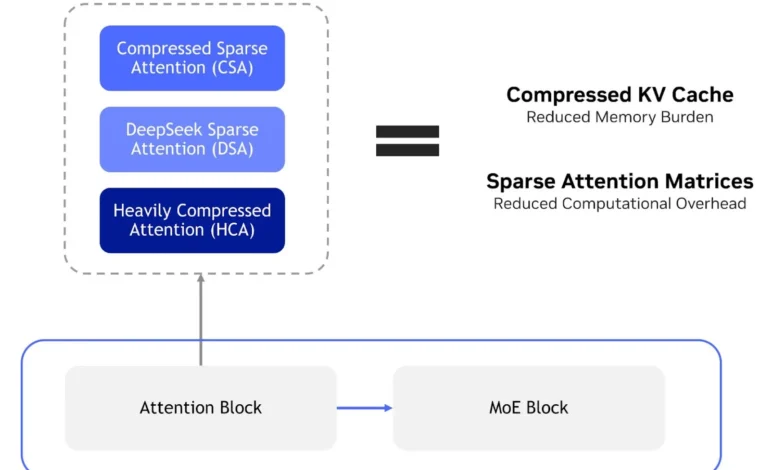

The mechanism behind that claim is a hybrid attention approach combining:

– Compressed Sparse Attention (CSA), which dynamically compresses KV entries to reduce KV cache footprint, then applies sparse attention to cut computation.
– DeepSeek Sparse Attention (DSA), which sparsifies attention matrices to lower overhead.
– Heavily Compressed Attention (HCA), which consolidates KV entries across sets of tokens into compressed representations for further KV cache reduction.
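The general idea of "compress KV entries, then attend sparsely" can be illustrated with a toy example. The sketch below is not DeepSeek's CSA, DSA, or HCA implementation: it swaps in simple mean-pooling over fixed blocks for compression and a top-k selection over the pooled keys for sparsity, purely to show how both steps shrink the work per query. All function names are hypothetical.

```python
import math


def mean_pool(vectors, block_size):
    """Compress each run of `block_size` vectors into a single mean vector."""
    pooled = []
    for start in range(0, len(vectors), block_size):
        block = vectors[start:start + block_size]
        dim = len(block[0])
        pooled.append([sum(v[i] for v in block) / len(block) for i in range(dim)])
    return pooled


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def toy_compressed_sparse_attention(query, keys, values, block_size, top_k):
    """Attend over pooled KV blocks, keeping only the top_k highest-scoring blocks."""
    ck = mean_pool(keys, block_size)      # compressed keys
    cv = mean_pool(values, block_size)    # compressed values
    scale = 1.0 / math.sqrt(len(query))
    scores = [dot(query, k) * scale for k in ck]

    # Sparse step: keep only the indices of the top_k blocks.
    keep = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:top_k]

    # Numerically stable softmax over the kept blocks only.
    peak = max(scores[i] for i in keep)
    weights = [math.exp(scores[i] - peak) for i in keep]
    total = sum(weights)

    dim = len(cv[0])
    out = [sum(w * cv[i][d] for w, i in zip(weights, keep)) / total for d in range(dim)]
    return out, len(ck), sorted(keep)


keys = [[1.0, 0.0]] * 4 + [[0.0, 1.0]] * 4
values = [[1.0, 1.0]] * 4 + [[5.0, 5.0]] * 4
out, cached_entries, kept = toy_compressed_sparse_attention(
    [0.0, 1.0], keys, values, block_size=4, top_k=1
)
print(out, cached_entries, kept)
```

Here eight cached tokens collapse to two entries, and the query touches only one of them. Production designs use learned compression and selection rather than fixed pooling.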

For developers, the real-world consequence is economic: as open models approach frontier capabilities, the differentiator increasingly shifts from “which model is best” to “which model can be deployed at the lowest token cost.” When long-context agents become common, infrastructure efficiency starts to look like a product feature, not a backend detail.

How NVIDIA Blackwell fits into the same long-context push

According to NVIDIA’s reported figures, out-of-the-box testing of DeepSeek-V4-Pro on NVIDIA GB200 NVL72 shows over 150 tokens/sec/user. NVIDIA also describes broader evaluation using vLLM’s Day 0 Blackwell recipe to capture baseline performance characteristics across configurations.

While exact numbers vary by workload and settings, the underlying message is consistent: long-context inference needs systems designed for throughput and memory efficiency, not just raw model size. Blackwell is presented as built for that, and Misryoum views it as part of a broader trend in which AI compute platforms increasingly market “deployment readiness” alongside training capabilities.

Building with GPU-accelerated endpoints (faster prototyping)

NVIDIA’s GPU-accelerated endpoints, available through build.nvidia.com as part of its developer program, are framed as a way to prototype with DeepSeek V4 before moving to self-hosted deployment. That matters for agentic applications because iteration speed determines whether prototypes reach production-ready reliability.

DeepSeek V4 is also described as available via NVIDIA NIM for day-0 deployment. In other words, the pathway is designed to reduce friction between model availability and application integration, particularly for long-context coding, document analysis, and agent workflows that rely on familiar API patterns.
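The “familiar API patterns” mentioned above usually mean an OpenAI-style chat completions request. The sketch below builds such a request using only the Python standard library. The base URL, the model identifier, and the `NVIDIA_API_KEY` environment variable name are assumptions made for illustration; check the model's page on build.nvidia.com for the actual values.

```python
import json
import os
import urllib.request

BASE_URL = "https://integrate.api.nvidia.com/v1"  # assumed endpoint; verify before use
MODEL_ID = "deepseek-ai/deepseek-v4-pro"          # hypothetical model identifier


def build_chat_request(prompt, api_key, max_tokens=512):
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the first choice's text."""
    with urllib.request.urlopen(request, timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("NVIDIA_API_KEY")  # assumed variable name
    if key:
        print(send(build_chat_request("Summarize the attached build log.", key)))
```

Because the request shape is the same one many self-hosted servers accept, moving from a hosted prototype to a self-hosted deployment can often be a matter of changing the base URL and model name.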

Serving options: SGLang and vLLM for different performance goals

SGLang is described as offering three primary serving recipes tuned for different latency/throughput profiles (low-latency, balanced, and max-throughput), plus specialized setups for long-context workloads and prefill/decode disaggregation. Prefill/decode separation is important because long contexts stress prefill work (processing the prompt) and then decode work (generating tokens), and optimizing both phases can change the perceived responsiveness of an agent.
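A simple latency model shows why the two phases are worth tuning separately. In the sketch below, the decode rate of 150 tokens/sec/user is the figure quoted earlier in the article, while the prefill throughput is a hypothetical number chosen only to make the arithmetic easy to follow. Real systems are not this linear, so treat the result as an illustration rather than a prediction.

```python
def response_time(prompt_tokens, output_tokens, prefill_tps, decode_tps):
    """Return (time to first token, total time) in seconds for one request."""
    ttft = prompt_tokens / prefill_tps        # prefill: process the whole prompt
    decode_time = output_tokens / decode_tps  # decode: generate tokens one by one
    return ttft, ttft + decode_time


PREFILL_TPS = 50_000  # hypothetical prefill throughput, tokens/sec
DECODE_TPS = 150      # tokens/sec/user, the figure quoted in the article

ttft, total = response_time(
    prompt_tokens=1_000_000,
    output_tokens=1_500,
    prefill_tps=PREFILL_TPS,
    decode_tps=DECODE_TPS,
)
print(f"time to first token: {ttft:.0f} s, total: {total:.0f} s")
```

Under these assumptions, prefill accounts for two thirds of the wait on a full-length prompt, so speeding up decode alone would leave most of the perceived latency untouched.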

vLLM is positioned as another common path, offering single-node and multinode serving recipes on Blackwell and Hopper. It includes multinode prefill/decode disaggregation recipes scaling to large GPU counts, with support for tool calling, reasoning, and speculative decoding.

The bigger story: agents, memory, and inference economics

When agents store more state, the software stack has to handle more than text generation. It has to manage memory footprints, routing decisions, tool outputs, and multi-step coherence, all while keeping cost and latency within tolerable bounds. That’s why V4’s attention and KV-cache innovations are being paired with infrastructure designed for long-context throughput.

Open models are reaching capabilities that make agent workflows feel less like experiments and more like deployable products. The challenge for enterprises and builders is no longer only model selection; it is building an infrastructure strategy that can scale million-token interactions without ballooning operational cost.

For teams evaluating what comes next, DeepSeek V4 is a clear signal: the “future of AI” isn’t just bigger contexts—it’s efficient, infrastructure-aware long-context inference that can support agents in the real world.